Yahoo update beefs up on authority sites

Aaron Wall posted a blog entry about how Yahoo!’s recent algorithm update has apparently increased the weighting given to links and authority sites.

Predictably, a number of folks have complained about the update in the comments on Yahoo’s “Weather Report” blog. Jeremy Zawodny subsequently posted that their search team was paying close attention to the comments, which is always nice to hear.

Coincidentally, I’d also just recently posted about Google’s apparent use of page text to help identify a site’s overall authoritativeness for particular keywords/themes.

As they say, there’s nothing really new under the sun. I wonder whether the search engines are all returning to an authority/hub focus in algorithm development. It’s a strong concept and useful for ranking results, so the methodology for identifying authorities and hubs is likely here to stay.

Posted by Chris of Silvery on 07/20/2006

Filed under: General, Yahoo Algorithms, Authoritative-Hubs, authorities, Google, search-engine-algorithms, search-engines, SEO, Yahoo

Google Sitemaps Reveal Some of the Black Box

I earlier mentioned the Sitemaps upgrades announced in June and why I thought they were useful for webmasters. But the Sitemaps tools may also be useful in ways beyond the obvious, intended ones.

The information that Google has made available in Sitemaps is providing a cool bit of intel on yet another one of the 200+ parameters or “signals” that they’re using to rank pages for SERPs.

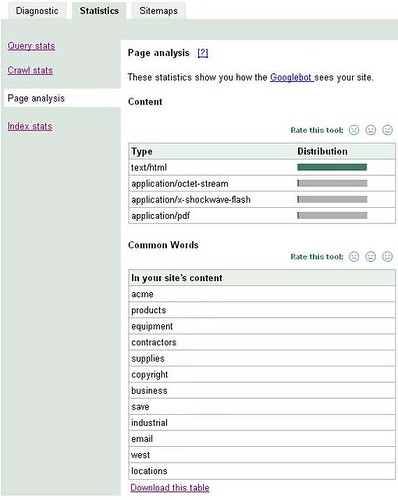

For reference, check out the Page Analysis Statistics that are provided in Sitemaps for my “Acme” products and services experimental site:

It seems unlikely to me that these stats on “Common Words” found “In your site’s content” were generated just for the sake of providing nice tools for us in Sitemaps. No, the more likely scenario is that Google was already collating the most common words found on your site for its own uses, and later chose to share some of these stats with us in Sitemaps.
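To make the idea of site-wide “Common Words” concrete, here is a minimal Python sketch of how such stats could be tallied across a site’s pages. It assumes you already have each page’s visible text; the `site_common_words` function, the tiny stopword list, and the sample Acme pages are all hypothetical and say nothing about how Google actually computes these numbers.

```python
import re
from collections import Counter

# Hypothetical, tiny stopword list; a real system would use a much larger one.
STOPWORDS = {"the", "and", "a", "an", "of", "to", "in", "for", "on",
             "is", "are", "with", "our", "your"}

def site_common_words(pages, top_n=10):
    """Tally words across every page's visible text and return the most common."""
    counts = Counter()
    for text in pages.values():
        words = re.findall(r"[a-z0-9']+", text.lower())
        counts.update(w for w in words if w not in STOPWORDS)
    return counts.most_common(top_n)

# Made-up visible text for a few pages of an "Acme" site.
pages = {
    "/": "Acme widgets and Acme gadgets for your home.",
    "/widgets": "Our widgets are the best widgets.",
    "/services": "Acme repair services for widgets and gadgets.",
}

print(site_common_words(pages, top_n=3))
# [('widgets', 4), ('acme', 3), ('gadgets', 2)]
```

Note that counting is done over the whole site rather than per page, which is exactly what makes the resulting list a site-level signal instead of a page-level one.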

This is significant, because we already know that Google tracks the keyword content of each page in order to assess its relevance for search queries containing that term. But why would Google track your most common keywords in a site-wide context?

One good explanation presents itself: Google might be tracking common terms used throughout a site in order to assess if that site should be considered authoritative for particular keywords or thematic categories.

Early on, algorithmic researchers such as Jon Kleinberg worked on methods by which “authoritative” sites and “hubs” could be identified. IBM and others did further research on authority/hub identification, and I heard engineers from Teoma speak on the importance of these approaches a few times at SES conferences when explaining the ExpertRank system their algorithms were based upon.
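Kleinberg’s approach is known as HITS (Hyperlink-Induced Topic Search): a good hub links to many good authorities, a good authority is linked to by many good hubs, and the two scores are refined iteratively. Below is a minimal Python sketch of that mutual-reinforcement idea over a toy link graph. The page names and iteration count are made up, and this is not a description of Teoma’s ExpertRank or any search engine’s production system.

```python
import math

def hits(links, iterations=50):
    """Compute HITS hub and authority scores by repeated updates.

    `links` maps each page to the list of pages it links to.
    """
    nodes = set(links) | {t for targets in links.values() for t in targets}
    hub = {n: 1.0 for n in nodes}
    auth = {n: 1.0 for n in nodes}
    for _ in range(iterations):
        # Authority update: sum the hub scores of the pages linking in.
        new_auth = {n: 0.0 for n in nodes}
        for src, targets in links.items():
            for t in targets:
                new_auth[t] += hub[src]
        # Hub update: sum the authority scores of the pages linked to.
        new_hub = {n: sum(new_auth[t] for t in links.get(n, [])) for n in nodes}
        # Normalize (L2) so the scores stay bounded between iterations.
        a_norm = math.sqrt(sum(v * v for v in new_auth.values())) or 1.0
        h_norm = math.sqrt(sum(v * v for v in new_hub.values())) or 1.0
        auth = {n: v / a_norm for n, v in new_auth.items()}
        hub = {n: v / h_norm for n, v in new_hub.items()}
    return hub, auth

# Toy graph: three hub-like pages all point at "expert"; only hub1 also links "other".
links = {
    "hub1": ["expert", "other"],
    "hub2": ["expert"],
    "hub3": ["expert"],
}
hub, auth = hits(links)
print(max(auth, key=auth.get), max(hub, key=hub.get))
```

In this toy graph, “expert” ends up with the top authority score because every hub points at it, while “hub1” gets the top hub score because it links to both pages that have any authority.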

So, it’s not all that surprising that Google may be trying to use commonly occurring text to help identify authoritative sites for various themes. This would be one good automated method for classifying sites by subject category and keyword.
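One plausible way to turn site-wide word counts into theme classifications is to compare them against a vocabulary for each theme using cosine similarity, a standard information-retrieval measure. The sketch below assumes hypothetical theme vocabularies (`THEMES`), a hypothetical `threshold` of 0.3, and made-up counts for an Acme-style site. It illustrates the idea only; Google has not confirmed using anything like it.

```python
import math
from collections import Counter

# Hypothetical theme vocabularies; real category term lists would be far larger.
THEMES = {
    "home-improvement": {"widgets": 1.0, "gadgets": 1.0, "repair": 1.0, "home": 1.0},
    "travel": {"flights": 1.0, "hotels": 1.0, "beach": 1.0},
}

def cosine(a, b):
    """Cosine similarity between two sparse term-weight mappings."""
    dot = sum(a[k] * b.get(k, 0.0) for k in a)
    na = math.sqrt(sum(v * v for v in a.values()))
    nb = math.sqrt(sum(v * v for v in b.values()))
    return dot / (na * nb) if na and nb else 0.0

def classify_site(word_counts, threshold=0.3):
    """Return the themes whose vocabulary is similar enough to the site's words."""
    scores = {theme: cosine(word_counts, vocab) for theme, vocab in THEMES.items()}
    return {t: round(s, 3) for t, s in scores.items() if s >= threshold}

# Made-up site-wide word counts for an Acme-style site.
site_counts = Counter({"widgets": 4, "acme": 3, "gadgets": 2, "repair": 1,
                       "home": 1, "best": 1, "services": 1})
print(classify_site(site_counts))
# {'home-improvement': 0.696}
```

Cosine similarity is used here because it compares the *mix* of terms rather than raw volume, so a large site and a small site with the same topical focus would score similarly.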

The take-away concept is that Google may be using words found in the visible text throughout your site to assess whether you’re authoritative for particular themes or not.

Posted by Chris of Silvery on 07/11/2006

Filed under: Google, Tools Algorithms, Authoritative-Hubs, ExpertRank, Google, Hubs, Keyword-Classification, On-Page-Factors, PageRank, Search Engine Optimization, SEO, Sitemaps